Deploying a custom YOLOv5s model on the ELF2 development board (Forlinx Embedded)

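This post is a from-scratch tutorial on deploying a self-trained YOLOv5s model to the Forlinx ELF2 development board: the model is converted to RKNN format with RKNN-Toolkit2 and then run for inference on the board.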

Obtaining a custom-trained YOLOv5s .pt model

Prepare the custom dataset (I use a dataset in VOC format)

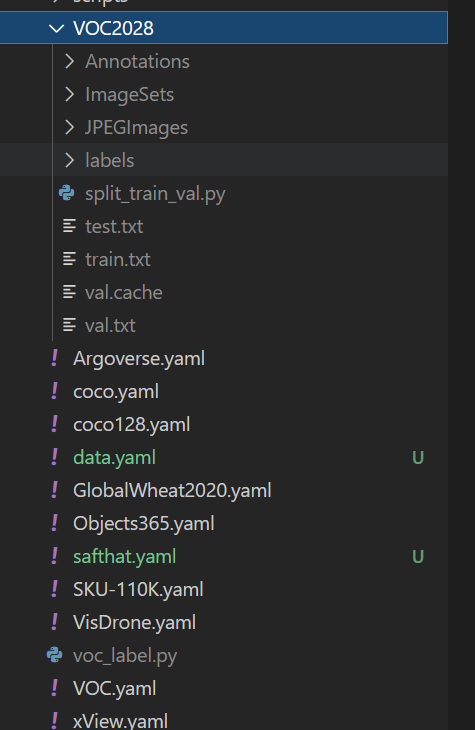

└─VOC2028: custom dataset
  ├─Annotations  dataset label files, in XML format
  ├─ImageSets    dataset split files
  │ └─Main
  ├─JPEGImages   dataset images
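Before splitting, it can be worth checking that every image has a matching annotation file. The following is a minimal sketch under assumptions: the helper name find_unpaired is hypothetical, and images are assumed to use the .jpg extension, as the later scripts in this post do.

```python
import os


def find_unpaired(dataset_root):
    """Return (images_without_xml, xmls_without_image) as sorted lists of file stems."""
    ann_dir = os.path.join(dataset_root, "Annotations")
    img_dir = os.path.join(dataset_root, "JPEGImages")
    # Compare file names without their extensions.
    xml_stems = {os.path.splitext(f)[0] for f in os.listdir(ann_dir)
                 if f.lower().endswith(".xml")}
    img_stems = {os.path.splitext(f)[0] for f in os.listdir(img_dir)
                 if f.lower().endswith(".jpg")}
    return sorted(img_stems - xml_stems), sorted(xml_stems - img_stems)
```

Calling find_unpaired("VOC2028") from the parent directory returns two lists; both should be empty for a consistent dataset.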

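Running python3 split_train_val.py from the directory that contains split_train_val.py produces the following directory structure:

└─VOC2028: custom dataset
  ├─Annotations  dataset label files, in XML format
  ├─ImageSets    dataset split files
  │ └─Main
  │   ├─test.txt
  │   ├─train.txt
  │   └─val.txt
  ├─JPEGImages   dataset images
  ├─split_train_val.py  script that splits the dataset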

The code of split_train_val.py is as follows:

"""
Author: dragonforward
Summary: split the dataset into training, validation and test sets at a ratio of 8:1:1.
"""
import os
import random
import argparse

parser = argparse.ArgumentParser()
parser.add_argument('--xml_path', default='Annotations/', type=str, help='input xml label path')
parser.add_argument('--txt_path', default='ImageSets/Main/', type=str, help='output txt label path')
opt = parser.parse_args()

train_percent = 0.8
val_percent = 0.1
test_percent = 0.1  # informational only: the test set is whatever remains

xmlfilepath = opt.xml_path
txtsavepath = opt.txt_path
total_xml = os.listdir(xmlfilepath)
if not os.path.exists(txtsavepath):
    os.makedirs(txtsavepath)

num = len(total_xml)
indices = list(range(num))  # named `indices` so the built-in `list` is not shadowed
t_train = int(num * train_percent)
t_val = int(num * val_percent)

# Draw the training indices, then remove them from the pool.
train = random.sample(indices, t_train)
for i in train:
    indices.remove(i)

# Draw the validation indices from what is left; the remainder becomes the test set.
val = random.sample(indices, t_val)
for i in val:
    indices.remove(i)

file_train = open(txtsavepath + '/train.txt', 'w')
file_val = open(txtsavepath + '/val.txt', 'w')
file_test = open(txtsavepath + '/test.txt', 'w')

for i in train:
    name = total_xml[i][:-4] + '\n'  # drop the ".xml" extension
    file_train.write(name)
for i in val:
    name = total_xml[i][:-4] + '\n'
    file_val.write(name)
for i in indices:
    name = total_xml[i][:-4] + '\n'
    file_test.write(name)

file_train.close()
file_val.close()
file_test.close()
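The script above draws a different split on every run. If a repeatable split is wanted, a seeded variant along the following lines may help. This is only a sketch: the function name split_names and the seed parameter are assumptions and are not part of the script above.

```python
import random


def split_names(names, train_ratio=0.8, val_ratio=0.1, seed=0):
    """Shuffle names with a fixed seed and cut them into train/val/test lists."""
    shuffled = sorted(names)  # sort first so the result does not depend on listing order
    random.Random(seed).shuffle(shuffled)
    n_train = int(len(shuffled) * train_ratio)
    n_val = int(len(shuffled) * val_ratio)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # whatever remains goes to the test set
    return train, val, test
```

With 25 file names and the default ratios this yields 20 training, 2 validation and 3 test entries, and the same seed always gives the same assignment.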

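The directory structure is as follows:

└─VOC2028: custom dataset
  ├─Annotations  dataset label files, in XML format
  ├─ImageSets    dataset split files
  │ └─Main
  ├─JPEGImages   dataset images
  └─labels       YOLOv5 uses this folder as the training annotation folder
└─voc_label.py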

The code of voc_label.py is as follows:

# -*- coding: utf-8 -*-
import xml.etree.ElementTree as ET
import os

sets = ['train', 'val', 'test']  # remove 'test' if your Main folder has no test.txt
classes = ["hat", "people"]  # change to your own classes
# classes = ["brickwork", "coil", "rebar"]
# The original VOC dataset has the following 20 classes:
# classes = ["aeroplane", 'bicycle', 'bird', 'boat', 'bottle', 'bus', 'car', 'cat', 'chair', 'cow', 'diningtable', 'dog',
#            'horse', 'motorbike', 'person', 'pottedplant', 'sheep', 'sofa', 'train', 'tvmonitor']  # class names

# abs_path = os.getcwd()  # this script is located at /root/yolov5/data/voc_label.py
abs_path = '/root/yolov5/data/'
voc_root = os.path.join(abs_path, 'VOC2028')


def convert(size, box):
    # size = (width, height); box = (xmin, xmax, ymin, ymax), all in pixels.
    # Returns the normalized YOLO box (x_center, y_center, width, height).
    dw = 1. / (size[0])
    dh = 1. / (size[1])
    x = (box[0] + box[1]) / 2.0 - 1
    y = (box[2] + box[3]) / 2.0 - 1
    w = box[1] - box[0]
    h = box[3] - box[2]
    x = x * dw
    w = w * dw
    y = y * dh
    h = h * dh
    return x, y, w, h


def convert_annotation(image_id):
    in_path = os.path.join(voc_root, 'Annotations', '%s.xml' % image_id)
    out_path = os.path.join(voc_root, 'labels', '%s.txt' % image_id)
    # `with` ensures both files are closed after each annotation is converted
    with open(in_path, encoding='UTF-8') as in_file, open(out_path, 'w') as out_file:
        tree = ET.parse(in_file)
        root = tree.getroot()
        size = root.find('size')
        w = int(size.find('width').text)
        h = int(size.find('height').text)
        for obj in root.iter('object'):
            difficult = obj.find('difficult').text
            # difficult = obj.find('Difficult').text
            cls = obj.find('name').text
            if cls not in classes or int(difficult) == 1:
                continue
            cls_id = classes.index(cls)
            xmlbox = obj.find('bndbox')
            b = (float(xmlbox.find('xmin').text), float(xmlbox.find('xmax').text), float(xmlbox.find('ymin').text),
                 float(xmlbox.find('ymax').text))
            b1, b2, b3, b4 = b
            # Clamp annotations that extend past the image border
            if b2 > w:
                b2 = w
            if b4 > h:
                b4 = h
            b = (b1, b2, b3, b4)
            bb = convert((w, h), b)
            out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')


os.makedirs(os.path.join(voc_root, 'labels'), exist_ok=True)
for image_set in sets:
    with open(os.path.join(voc_root, 'ImageSets', 'Main', '%s.txt' % image_set)) as id_file:
        image_ids = id_file.read().strip().split()
    with open(os.path.join(voc_root, '%s.txt' % image_set), 'w') as list_file:
        for image_id in image_ids:
            # Write the full image path; a partial path may cause errors during training
            list_file.write(os.path.join(voc_root, 'JPEGImages', '%s.jpg\n' % image_id))
            convert_annotation(image_id)
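To make the coordinate conversion in convert concrete, here is a small worked example that applies the same formula to one box. The function name voc_to_yolo and the sample numbers are made up for illustration.

```python
def voc_to_yolo(size, box):
    """size = (width, height); box = (xmin, xmax, ymin, ymax), all in pixels."""
    img_w, img_h = size
    xmin, xmax, ymin, ymax = box
    # Same arithmetic as convert(): box center (shifted by one pixel) and box size,
    # each divided by the image dimension so the result lies in [0, 1].
    x_center = ((xmin + xmax) / 2.0 - 1) / img_w
    y_center = ((ymin + ymax) / 2.0 - 1) / img_h
    width = (xmax - xmin) / img_w
    height = (ymax - ymin) / img_h
    return x_center, y_center, width, height


x, y, w, h = voc_to_yolo((640, 480), (100, 300, 50, 250))
print(round(x, 4), round(y, 4), round(w, 4), round(h, 4))
# prints: 0.3109 0.3104 0.3125 0.4167
```

A 200 x 200 pixel box in a 640 x 480 image therefore becomes one label line of the form "class_id 0.3109 0.3104 0.3125 0.4167" (before rounding).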

Figure 1: File listing

Training the model

git clone https://github.com/ultralytics/yolov5

cd yolov5

pip install -r requirements.txt

pip install onnx

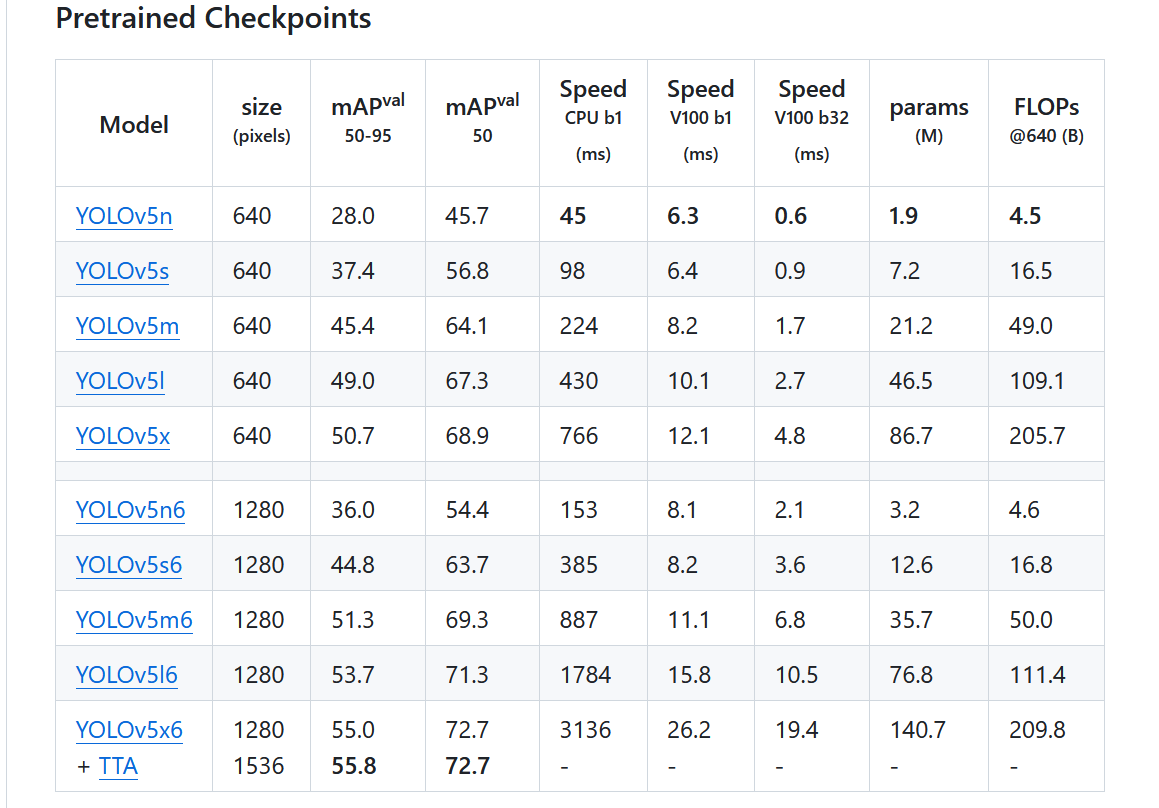

- Download the pretrained weights (I tried both the v7.0 and the v6.0 .pt files, and both work)

https://github.com/ultralytics/yolov5/releases/download/v7.0/yolov5s.pt

Figure 2: Official .pt model

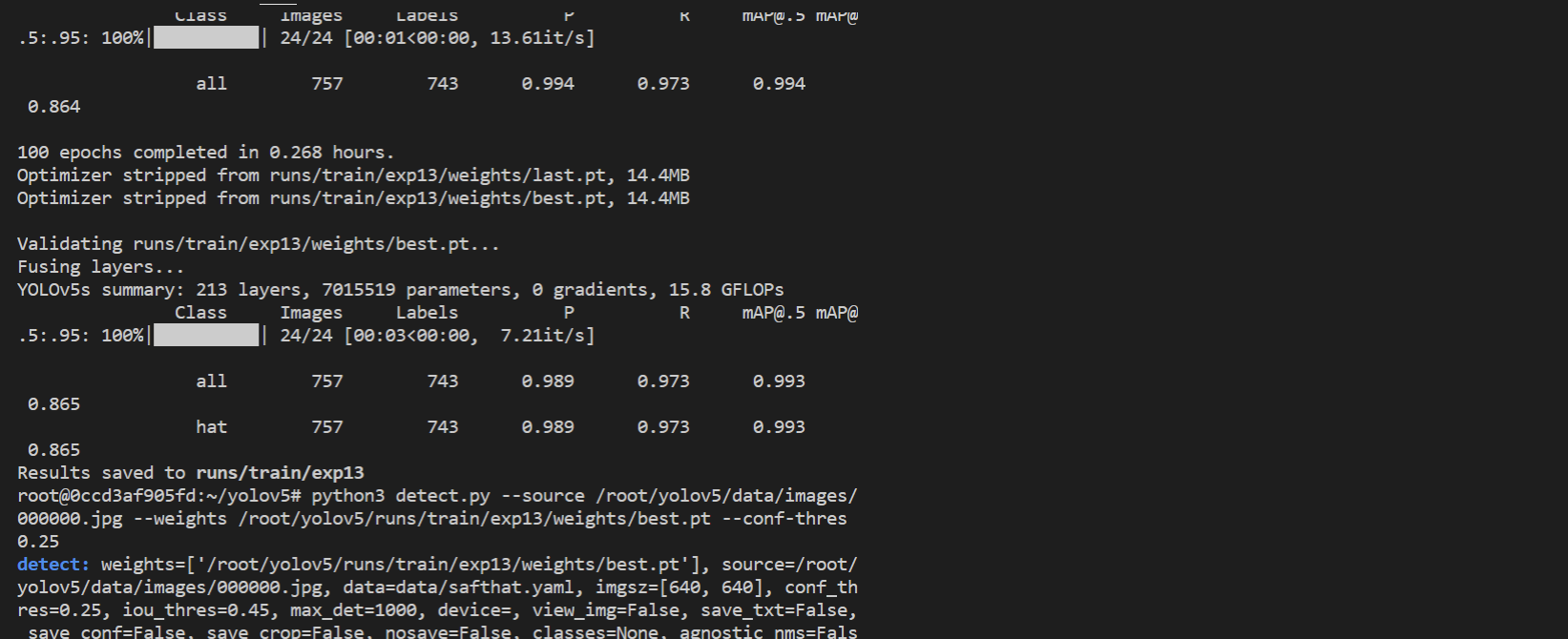

python3 train.py --weights weights/yolov5s.pt --cfg models/yolov5s.yaml --data data/safthat.yaml --epochs 150 --batch-size 16 --multi-scale --device 0

Figure 3: Model training
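The training command points at data/safthat.yaml, whose content is not shown in this post. As a rough sketch only, a YOLOv5 dataset file for this setup might be generated as below. The paths assume the train.txt, val.txt and test.txt lists written by voc_label.py, and the class names assume the classes list in that script; adjust both to your own data. The helper name render_dataset_yaml is hypothetical.

```python
def render_dataset_yaml(list_dir, class_names):
    """Build the text of a YOLOv5 dataset YAML that points at image list files."""
    lines = [
        f"train: {list_dir}/train.txt",
        f"val: {list_dir}/val.txt",
        f"test: {list_dir}/test.txt",
        f"nc: {len(class_names)}",  # number of classes
        "names: [" + ", ".join(f"'{n}'" for n in class_names) + "]",
    ]
    return "\n".join(lines) + "\n"


yaml_text = render_dataset_yaml("/root/yolov5/data/VOC2028", ["hat", "people"])
print(yaml_text)
```

The printed text can be saved under the file name passed to --data.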

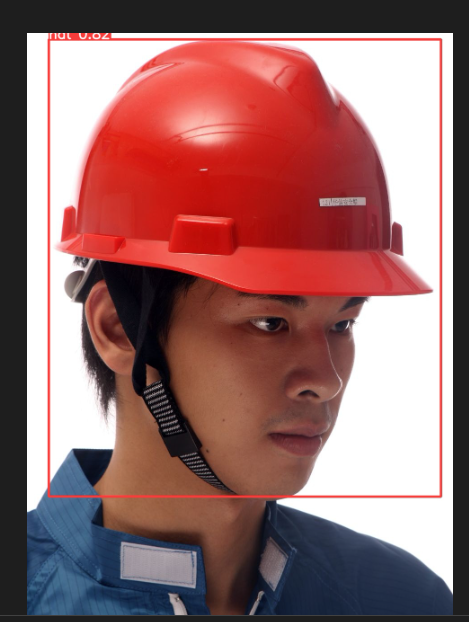

python3 detect.py --source /root/yolov5/data/images/000000.jpg --weights /root/yolov5/runs/train/exp13/weights/best.pt --conf-thres 0.25

Figure 4: Testing the safety-helmet model

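Converting the custom YOLOv5s .pt model (the practical part)

Downloading Rockchip's officially modified YOLOv5 and setting up the environment

I did this in a conda environment: first clone the repository, then install conda (you can refer to the linked article). I used Python 3.8.

The steps are: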

git clone https:

(www) C:\Users\wxw>cd C:\Users\wxw\PycharmProjects\yolov5

Configure the mirror sources in the conda terminal

conda config --remove-key channels

conda config --add channels https:

conda config --add channels https:

conda config --add channels https:

conda config --set show_channel_urls yes

pip config set global.index-url https:

(www) C:\Users\wxw\PycharmProjects\yolov5>pip install -r requirements.txt

The output shows that the installation succeeded:

Successfully installed MarkupSafe-2.1.5 Pillow-10.4.0 PyYAML-6.0.2 absl-py-2.1.0 asttokens-3.0.0 backcall-0.2.0 cachetools-5.5.1 certifi-2025.1.31 charset-normalizer-3.4.1 colorama-0.4.6 contourpy-1.1.1 cycler-0.12.1 decorator-5.1.1 executing-2.2.0 filelock-3.16.1 fonttools-4.55.8 fsspec-2025.2.0 gitdb-4.0.12 gitpython-3.1.44 google-auth-2.38.0 google-auth-oauthlib-1.0.0 grpcio-1.70.0 idna-3.10 importlib-metadata-8.5.0 importlib-resources-6.4.5 ipython-8.12.3 jedi-0.19.2 jinja2-3.1.5 kiwisolver-1.4.7 markdown-3.7 matplotlib-3.7.5 matplotlib-inline-0.1.7 mpmath-1.3.0 networkx-3.1 numpy-1.24.4 oauthlib-3.2.2 opencv-python-4.11.0.86 packaging-24.2 pandas-2.0.3 parso-0.8.4 pickleshare-0.7.5 prompt-toolkit-3.0.50 protobuf-5.29.3 psutil-6.1.1 pure-eval-0.2.3 pyasn1-0.6.1 pyasn1-modules-0.4.1 pygments-2.19.1 pyparsing-3.1.4 python-dateutil-2.9.0.post0 pytz-2025.1 requests-2.32.3 requests-oauthlib-2.0.0 rsa-4.9 scipy-1.10.1 seaborn-0.13.2 six-1.17.0 smmap-5.0.2 stack-data-0.6.3 sympy-1.13.3 tensorboard-2.14.0 tensorboard-data-server-0.7.2 thop-0.1.1.post2209072238 torch-2.4.1 torchvision-0.19.1 tqdm-4.67.1 traitlets-5.14.3 typing-extensions-4.12.2 tzdata-2025.1 urllib3-2.2.3 wcwidth-0.2.13 werkzeug-3.0.6 zipp-3.20.2

Figure 5: conda UI

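Converting the model to ONNX (the export differs from the official YOLOv5, so Rockchip's modified YOLOv5 must be used)

Run the following command in the conda terminal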

python export.py --rknpu --weight yolov5s_hat.pt

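The output is shown below. The first run fails with the error ONNX: export failure 23.0s: DLL load failed while importing onnx_cpp2py_export: (DLL). Downgrading onnx from the automatically installed 1.17.0 to 1.16.1 resolves it, and the second run produces the optimized ONNX file.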

(www) C:\Users\wxw\PycharmProjects\yolov5>python export.py --rknpu --weight yolov5s_hat.pt

export: data=C:\Users\wxw\PycharmProjects\yolov5\data\coco128.yaml, weights=['yolov5s_hat.pt'], imgsz=[640, 640], batch_size=1, device=cpu, half=False, inplace=False, keras=False, optimize=False, int8=False, dynamic=False, simplify=False, opset=12, verbose=False, workspace=4, nms=False, agnostic_nms=False, topk_per_class=100, topk_all=100, iou_thres=0.45, conf_thres=0.25, include=['onnx'], rknpu=True

YOLOv5 2023-12-19 Python-3.8.20 torch-2.4.1+cpu CPU

C:\Users\wxw\PycharmProjects\yolov5\models\experimental.py:80: FutureWarning: You are using `torch.load` with `weights_only=False` (the current default value), which uses the default pickle module implicitly. It is possible to construct malicious pickle data which will execute arbitrary code during unpickling (See https:

ckpt = torch.load(attempt_download(w), map_location='cpu') # load

Fusing layers...

YOLOv5s summary: 213 layers, 7015519 parameters, 0 gradients, 15.8 GFLOPs

---> save anchors for RKNN

[[10.0, 13.0], [16.0, 30.0], [33.0, 23.0], [30.0, 61.0], [62.0, 45.0], [59.0, 119.0], [116.0, 90.0], [156.0, 198.0], [373.0, 326.0]]

export detect model for RKNPU

PyTorch: starting from yolov5s_hat.pt with output shape (1, 21, 80, 80) (13.7 MB)

requirements: YOLOv5 requirement "onnx" not found, attempting AutoUpdate...

Looking in indexes: https:

Collecting onnx

Using cached https:

Requirement already satisfied: numpy>=1.20 in c:\users\wxw\.conda\envs\www\lib\site-packages (from onnx) (1.24.4)

Requirement already satisfied: protobuf>=3.20.2 in c:\users\wxw\.conda\envs\www\lib\site-packages (from onnx) (5.29.3)

Installing collected packages: onnx

Successfully installed onnx-1.17.0

requirements: 1 package updated per ['onnx']

requirements: Restart runtime or rerun command for updates to take effect

ONNX: export failure 23.0s: DLL load failed while importing onnx_cpp2py_export: (DLL)

(www) C:\Users\wxw\PycharmProjects\yolov5>pip install onnx==1.16.1

Looking in indexes: https:

Collecting onnx==1.16.1

Downloading https:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 14.4/14.4 MB 20.2 MB/s eta 0:00:00

Requirement already satisfied: numpy>=1.20 in c:\users\wxw\.conda\envs\www\lib\site-packages (from onnx==1.16.1) (1.24.4)

Requirement already satisfied: protobuf>=3.20.2 in c:\users\wxw\.conda\envs\www\lib\site-packages (from onnx==1.16.1) (5.29.3)

Installing collected packages: onnx

Attempting uninstall: onnx

Found existing installation: onnx 1.17.0

Uninstalling onnx-1.17.0:

Successfully uninstalled onnx-1.17.0

Successfully installed onnx-1.16.1

(www) C:\Users\wxw\PycharmProjects\yolov5>python export.py --rknpu --weight yolov5s_hat.pt

export: data=C:\Users\wxw\PycharmProjects\yolov5\data\coco128.yaml, weights=['yolov5s_hat.pt'], imgsz=[640, 640], batch_size=1, device=cpu, half=False, inplace=False, keras=False, optimize=False, int8=False, dynamic=False, simplify=False, opset=12, verbose=False, workspace=4, nms=False, agnostic_nms=False, topk_per_class=100, topk_all=100, iou_thres=0.45, conf_thres=0.25, include=['onnx'], rknpu=True

YOLOv5 2023-12-19 Python-3.8.20 torch-2.4.1+cpu CPU

C:\Users\wxw\PycharmProjects\yolov5\models\experimental.py:80: FutureWarning: You are using `torch.load` with `weights_only=False` (the current default value), which uses the default pickle module implicitly. It is possible to construct malicious pickle data which will execute arbitrary code during unpickling (See https:

ckpt = torch.load(attempt_download(w), map_location='cpu') # load

Fusing layers...

YOLOv5s summary: 213 layers, 7015519 parameters, 0 gradients, 15.8 GFLOPs

---> save anchors for RKNN

[[10.0, 13.0], [16.0, 30.0], [33.0, 23.0], [30.0, 61.0], [62.0, 45.0], [59.0, 119.0], [116.0, 90.0], [156.0, 198.0], [373.0, 326.0]]

export detect model for RKNPU

PyTorch: starting from yolov5s_hat.pt with output shape (1, 21, 80, 80) (13.7 MB)

ONNX: starting export with onnx 1.16.1...

ONNX: export success 1.2s, saved as yolov5s_hat.onnx (26.8 MB)

Export complete (2.2s)

Results saved to C:\Users\wxw\PycharmProjects\yolov5

Detect: python detect.py --weights yolov5s_hat.onnx

Validate: python val.py --weights yolov5s_hat.onnx

PyTorch Hub: model = torch.hub.load('ultralytics/yolov5', 'custom', 'yolov5s_hat.onnx')

Visualize: https:

(www) C:\Users\wxw\PycharmProjects\yolov5>
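In the first run above, the DLL error only surfaces part-way through the export. A small probe like the following can confirm up front whether onnx imports cleanly in the current environment. It is a sketch: the function name probe_onnx is hypothetical, and it relies only on the fact that a failed DLL load is raised as an ImportError.

```python
import importlib


def probe_onnx():
    """Return (True, version) if onnx imports, else (False, error message)."""
    try:
        onnx = importlib.import_module("onnx")
    except ImportError as exc:  # also covers "DLL load failed while importing ..."
        return False, str(exc)
    return True, str(onnx.__version__)


ok, info = probe_onnx()
print("onnx import ok:" if ok else "onnx import failed:", info)
```

If the probe fails on a fresh install, pinning the version as in the log (pip install onnx==1.16.1) is the fix that worked here.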

Compared with the previous export, the operators in the optimized model are noticeably simplified.

Figure 6: ONNX model graph
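The export log reports an output shape of (1, 21, 80, 80). For a standard YOLOv5 detection head this follows from the class count: each of the 3 anchors per scale predicts 4 box values, 1 objectness score and one score per class. The sketch below reproduces that arithmetic; the function name is made up, and the strides 8, 16 and 32 are the standard YOLOv5 values rather than something stated in the log.

```python
def head_output_shapes(num_classes, img_size=640, anchors_per_scale=3, strides=(8, 16, 32)):
    """Expected (batch, channels, height, width) of each YOLOv5 detection output."""
    channels = anchors_per_scale * (5 + num_classes)  # 4 box + 1 objectness + class scores
    return [(1, channels, img_size // s, img_size // s) for s in strides]


print(head_output_shapes(2))
# prints: [(1, 21, 80, 80), (1, 21, 40, 40), (1, 21, 20, 20)]
```

With 2 classes the channel count is 3 * (5 + 2) = 21. If the exported model shows a different channel count, the weights were probably trained with a different number of classes.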

Preparing the files needed for model conversion

The content of hat_subset.txt is as follows:

./subset/000032.jpg

./subset/000036.jpg

./subset/000076.jpg

./subset/000083.jpg

Figure 7: Resource listing
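A list file like hat_subset.txt can be written by hand, but for more than a few images a short script is less error-prone. The following is a minimal sketch; the function name write_subset_list, the limit parameter and the accepted extensions are assumptions.

```python
import os


def write_subset_list(image_dir, list_path, prefix="./subset", limit=None):
    """Write one '<prefix>/<file name>' line per image found in image_dir."""
    names = sorted(f for f in os.listdir(image_dir)
                   if f.lower().endswith((".jpg", ".jpeg", ".png")))
    if limit is not None:
        names = names[:limit]  # keep only the first `limit` images
    with open(list_path, "w") as fh:
        for name in names:
            fh.write(f"{prefix}/{name}\n")
    return names
```

For example, write_subset_list("subset", "hat_subset.txt") run next to the subset folder produces lines in the same form as shown above.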

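- Prepare the model and labels

Put the ONNX model obtained above into the model directory, edit the label file, and prepare a test image of a safety helmet.

The content of hat_label_list.txt is as follows: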

hat

person

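Figure 8: Model and label file listing

- Modify the build scripts

Update the paths in CMakeLists, the class definitions in the postprocess .cc and .h files in the cpp folder, and the dataset path and output RKNN model path in convert.py, as shown in the figures below.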

Figure 9: Modification 1

Figure 10: Modification 2

Figure 11: Modification 3

Figure 12: Modification 4
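When changing the class definitions in the postprocess sources, the class count has to agree with the label file. A quick way to read that number off the label file is sketched below. The function name summarize_labels is hypothetical, and which constant in postprocess.h holds the count depends on your model zoo version, so check the header itself.

```python
def summarize_labels(label_path):
    """Read a one-label-per-line file and derive the numbers the C++ demo needs."""
    with open(label_path, encoding="utf-8") as fh:
        labels = [line.strip() for line in fh if line.strip()]
    return {
        "labels": labels,
        "class_count": len(labels),
        "values_per_anchor": 5 + len(labels),  # 4 box values + objectness + class scores
    }
```

For the two-line hat_label_list.txt shown earlier this gives a class count of 2 and 7 values per anchor.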

Quantizing and converting to an RKNN model, and building the executable

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/examples/yolov5/python# python3 ./convert.py ../model/yolov5s_hat.onnx rk3588

I rknn-toolkit2 version: 2.1.0+708089d1

--> Config model

done

--> Loading model

I Loading : 100%|██████████████████████████████████████████████| 121/121 [00:00<00:00, 11037.64it/s]

done

--> Building model

I OpFusing 0: 100%|██████████████████████████████████████████████| 100/100 [00:00<00:00, 358.08it/s]

I OpFusing 1 : 100%|█████████████████████████████████████████████| 100/100 [00:00<00:00, 269.36it/s]

I OpFusing 2 : 100%|██████████████████████████████████████████████| 100/100 [00:01<00:00, 77.88it/s]

I GraphPreparing : 100%|████████████████████████████████████████| 149/149 [00:00<00:00, 3244.53it/s]

I Quantizating : 100%|████████████████████████████████████████████| 149/149 [00:57<00:00, 2.60it/s]

W build: The default input dtype of 'images' is changed from 'float32' to 'int8' in rknn model for performance!

Please take care of this change when deploy rknn model with Runtime API!

W build: The default output dtype of 'output0' is changed from 'float32' to 'int8' in rknn model for performance!

Please take care of this change when deploy rknn model with Runtime API!

W build: The default output dtype of '367' is changed from 'float32' to 'int8' in rknn model for performance!

Please take care of this change when deploy rknn model with Runtime API!

W build: The default output dtype of '369' is changed from 'float32' to 'int8' in rknn model for performance!

Please take care of this change when deploy rknn model with Runtime API!

I rknn building ...

I rknn buiding done.

done

--> Export rknn model

done
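The four stages in the log (config, load, build, export) map onto four RKNN-Toolkit2 calls. The sketch below shows that sequence in isolation. It is not the model zoo's convert.py: the dataset list and output paths are assumptions, and the mean and std values are the usual choice for YOLOv5 (scale 8-bit pixels to the range 0 to 1).

```python
def make_plan(onnx_path, platform, dataset_list, output_path):
    """Collect the conversion settings in one place so they are easy to review."""
    return {"onnx": onnx_path, "platform": platform,
            "dataset": dataset_list, "output": output_path}


def convert(plan):
    # Imported lazily: rknn-toolkit2 only exists inside the conversion environment.
    from rknn.api import RKNN

    rknn = RKNN(verbose=False)
    try:
        # mean 0 / std 255 maps 8-bit pixel values to [0, 1]
        rknn.config(mean_values=[[0, 0, 0]], std_values=[[255, 255, 255]],
                    target_platform=plan["platform"])
        if rknn.load_onnx(model=plan["onnx"]) != 0:
            raise RuntimeError("load_onnx failed")
        # Quantize to int8 using the calibration images listed in the dataset file
        if rknn.build(do_quantization=True, dataset=plan["dataset"]) != 0:
            raise RuntimeError("build failed")
        if rknn.export_rknn(plan["output"]) != 0:
            raise RuntimeError("export_rknn failed")
    finally:
        rknn.release()


# Assumed paths, relative to examples/yolov5/python
plan = make_plan("../model/yolov5s_hat.onnx", "rk3588",
                 "../model/hat_subset.txt", "../model/yolov5_hat.rknn")
# convert(plan)  # uncomment inside the RKNN-Toolkit2 environment
```

The int8 warnings in the log are a consequence of do_quantization=True: the input and the three outputs are stored as int8 in the resulting model.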

Build command and output:

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0# ./build-linux.sh -t rk3588 -a aarch64 -d yolov5

./build-linux.sh -t rk3588 -a aarch64 -d yolov5

/mnt/gcc-linaro-7.5.0-2019.12-x86_64_aarch64-linux-gnu/bin/aarch64-linux-gnu

============================================================================

BUILD_DEMO_NAME=yolov5

BUILD_DEMO_PATH=examples/yolov5/cpp

TARGET_SOC=rk3588

TARGET_ARCH=aarch64

BUILD_TYPE=Release

ENABLE_ASAN=OFF

INSTALL_DIR=/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo

BUILD_DIR=/mnt/rknn_model_zoo-2.1.0/build/build_rknn_yolov5_demo_rk3588_linux_aarch64_Release

CC=/mnt/gcc-linaro-7.5.0-2019.12-x86_64_aarch64-linux-gnu/bin/aarch64-linux-gnu-gcc

CXX=/mnt/gcc-linaro-7.5.0-2019.12-x86_64_aarch64-linux-gnu/bin/aarch64-linux-gnu-g++

====================================================================================

-- Configuring done

-- Generating done

-- Build files have been written to: /mnt/rknn_model_zoo-2.1.0/build/build_rknn_yolov5_demo_rk3588_linux_aarch64_Release

[ 33%] Built target audioutils

[ 33%] Built target fileutils

[ 50%] Built target imageutils

[ 66%] Built target imagedrawing

Scanning dependencies of target rknn_yolov5_demo

[ 83%] Building CXX object CMakeFiles/rknn_yolov5_demo.dir/rknpu2/yolov5.cc.o

[ 83%] Building CXX object CMakeFiles/rknn_yolov5_demo.dir/main.cc.o

[ 91%] Building CXX object CMakeFiles/rknn_yolov5_demo.dir/postprocess.cc.o

/mnt/rknn_model_zoo-2.1.0/examples/yolov5/cpp/postprocess.cc: In function 'char* coco_cls_to_name(int)':

/mnt/rknn_model_zoo-2.1.0/examples/yolov5/cpp/postprocess.cc:577:16: warning: ISO C++ forbids converting a string constant to 'char*' [-Wwrite-strings]

return "null";

^~~~~~

/mnt/rknn_model_zoo-2.1.0/examples/yolov5/cpp/postprocess.cc:585:12: warning: ISO C++ forbids converting a string constant to 'char*' [-Wwrite-strings]

return "null";

^~~~~~

[100%] Linking CXX executable rknn_yolov5_demo

[100%] Built target rknn_yolov5_demo

[ 16%] Built target fileutils

[ 33%] Built target imageutils

[ 50%] Built target imagedrawing

[ 83%] Built target rknn_yolov5_demo

[100%] Built target audioutils

Install the project...

-- Install configuration: "Release"

-- Installing: /mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo/./rknn_yolov5_demo

-- Set runtime path of "/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo/./rknn_yolov5_demo" to "$ORIGIN/lib"

-- Installing: /mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo/./model/hat.jpg

-- Installing: /mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo/./model/hat_label_list.txt

-- Installing: /mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo/model/yolov5.rknn

-- Installing: /mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo/model/yolov5_hat.rknn

-- Installing: /mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo/lib/librknnrt.so

-- Installing: /mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo/lib/librga.so

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0# cd install/

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install# ls

rk3588_linux_aarch64

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install# cd rk3588_linux_aarch64/

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64# ls

rknn_yolov5_demo rknn_yolov5_demo_hat_my.tar.gz

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64# cd rknn_yolov5_demo

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo# ls

lib model rknn_yolov5_demo

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo# ls model/

hat.jpg hat_label_list.txt yolov5.rknn yolov5_hat.rknn

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64/rknn_yolov5_demo# cd ..

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64# ls

rknn_yolov5_demo rknn_yolov5_demo_hat_my.tar.gz

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64# rm -rf rknn_yolov5_demo_hat_my.tar.gz

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64# tar -zcvf rknn_yolov5_demo_try.tar.gz

tar: Cowardly refusing to create an empty archive

Try 'tar --help' or 'tar --usage' for more information.

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64# tar -zcvf rknn_yolov5_demo_try.tar.gz rknn_yolov5_demo/

rknn_yolov5_demo/

rknn_yolov5_demo/model/

rknn_yolov5_demo/model/hat.jpg

rknn_yolov5_demo/model/hat_label_list.txt

rknn_yolov5_demo/model/yolov5.rknn

rknn_yolov5_demo/model/yolov5_hat.rknn

rknn_yolov5_demo/lib/

rknn_yolov5_demo/lib/librknnrt.so

rknn_yolov5_demo/lib/librga.so

rknn_yolov5_demo/rknn_yolov5_demo

root@5c542f109d4c:/mnt/rknn_model_zoo-2.1.0/install/rk3588_linux_aarch64#
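The first tar call in the session above failed because no input directory was given. For scripted packaging, the same archive can be produced from Python's standard tarfile module. This is a sketch, and the function name pack_directory is hypothetical.

```python
import os
import tarfile


def pack_directory(src_dir, archive_path):
    """Create a .tar.gz containing src_dir under its own base name; return member names."""
    base = os.path.basename(os.path.normpath(src_dir))
    with tarfile.open(archive_path, "w:gz") as tar:
        tar.add(src_dir, arcname=base)  # recursive by default
    with tarfile.open(archive_path, "r:gz") as tar:
        return tar.getnames()
```

Calling pack_directory("rknn_yolov5_demo", "rknn_yolov5_demo_try.tar.gz") from the install directory would produce an archive that unpacks into a rknn_yolov5_demo folder, like the one copied to the board.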

Testing on the board

root@elf2-desktop:~# tar -zxvf rknn_yolov5_demo_try.tar.gz

rknn_yolov5_demo/

rknn_yolov5_demo/model/

rknn_yolov5_demo/model/hat.jpg

rknn_yolov5_demo/model/hat_label_list.txt

rknn_yolov5_demo/model/yolov5.rknn

rknn_yolov5_demo/model/yolov5_hat.rknn

rknn_yolov5_demo/lib/

rknn_yolov5_demo/lib/librknnrt.so

rknn_yolov5_demo/lib/librga.so

rknn_yolov5_demo/rknn_yolov5_demo

root@elf2-desktop:~# cd rknn_yolov5_demo

root@elf2-desktop:~/rknn_yolov5_demo# ls

lib model rknn_yolov5_demo

root@elf2-desktop:~/rknn_yolov5_demo# chmod 777 rknn_yolov5_demo

root@elf2-desktop:~/rknn_yolov5_demo# ls

lib model rknn_yolov5_demo

root@elf2-desktop:~/rknn_yolov5_demo# ./rknn_yolov5_demo model/yolov5_hat.rknn model/hat.jpg

load lable ./model/hat_label_list.txt

model input num: 1, output num: 3

input tensors:

index=0, name=images, n_dims=4, dims=[1, 640, 640, 3], n_elems=1228800, size=1228800, fmt=NHWC, type=INT8, qnt_type=AFFINE, zp=-128, scale=0.003922

output tensors:

index=0, name=output0, n_dims=4, dims=[1, 21, 80, 80], n_elems=134400, size=134400, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=-128, scale=0.003922

index=1, name=367, n_dims=4, dims=[1, 21, 40, 40], n_elems=33600, size=33600, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=-128, scale=0.003922

index=2, name=369, n_dims=4, dims=[1, 21, 20, 20], n_elems=8400, size=8400, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=-128, scale=0.003922

model is NHWC input fmt

model input height=640, width=640, channel=3

origin size=640x640 crop size=640x640

input image: 640 x 640, subsampling: 4:2:0, colorspace: YCbCr, orientation: 1

scale=1.000000 dst_box=(0 0 639 639) allow_slight_change=1 _left_offset=0 _top_offset=0 padding_w=0 padding_h=0

src width=640 height=640 fmt=0x1 virAddr=0x0x25720530 fd=0

dst width=640 height=640 fmt=0x1 virAddr=0x0x2584c540 fd=0

src_box=(0 0 639 639)

dst_box=(0 0 639 639)

color=0x72

rga_api version 1.10.1_[0]

rknn_run

hat @ (130 31 517 513) 0.867

write_image path: out.png width=640 height=640 channel=3 data=0x25720530

root@elf2-desktop:~/rknn_yolov5_demo#

Figure 13: Test result
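The tensor listing in the board log describes every tensor as INT8 with qnt_type=AFFINE, zp=-128 and scale=0.003922. Under affine quantization a stored integer q maps back to a real value as (q - zp) * scale. The short example below works this through for the two extreme int8 values; the function name dequantize is made up.

```python
def dequantize(q, zero_point=-128, scale=0.003922):
    """Map a quantized int8 value back to its real value (affine quantization)."""
    return (q - zero_point) * scale


print(round(dequantize(127), 4))  # largest int8 value
print(dequantize(-128))           # smallest int8 value
# prints: 1.0001
# prints: 0.0
```

Since 0.003922 is approximately 1/255, the int8 range covers real values from 0 to about 1.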
